AI Performs Comparable to Humans in Reading Mammograms

Images

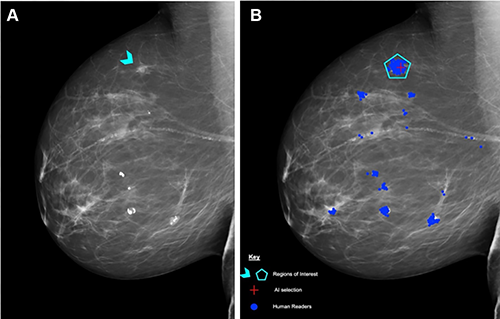

Researchers in the UK used a standardized assessment to compare the performance of a commercially available artificial intelligence (AI) algorithm with human readers of screening mammograms and found them to be comparable. The results were published in Radiology.

To improve the sensitivity and specificity of screening mammography, one solution is to have two readers interpret every mammogram. According to the researchers, double reading increases cancer detection rates by 6 to 15% and keeps recall rates low. However, this strategy is labor-intensive and difficult to achieve during reader shortages.

"There is a lot of pressure to deploy AI quickly to solve these problems, but we need to get it right to protect women's health," said Yan Chen, PhD, professor of digital screening at the University of Nottingham, United Kingdom.

Prof Chen and her research team used test sets from the Personal Performance in Mammographic Screening, or PERFORMS, quality assurance assessment utilized by the UK's National Health Service Breast Screening Program (NHSBSP), to compare the performance of human readers with AI. A single PERFORMS test consists of 60 challenging exams from the NHSBSP with abnormal, benign and normal findings. For each test mammogram, the reader's score is compared to the ground truth of the AI results.

"It's really important that human readers working in breast cancer screening demonstrate satisfactory performance," she said. "The same will be true for AI once it enters clinical practice."

The research team used data from two consecutive PERFORMS test sets, or 120 screening mammograms, and the same two sets to evaluate the performance of the AI algorithm. The researchers compared the AI test scores with the scores of the 552 human readers, including 315 (57%) board-certified radiologists and 237 non-radiologist readers consisting of 206 radiographers and 31 breast clinicians.

"The 552 readers in our study represent 68% of readers in the NHSBSP, so this provides a robust performance comparison between human readers and AI," Prof Chen said.

Treating each breast separately, there were 161/240 (67%) normal breasts, 70/240 (29%) breasts with malignancies, and 9/240 (4%) benign breasts. Masses were the most common malignant mammographic feature (45/70 or 64.3%), followed by calcifications (9/70 or 12.9%), asymmetries (8/70 or 11.4%), and architectural distortions (8/70 or 11.4%). The mean size of malignant lesions was 15.5 mm.

No difference in performance was observed between AI and human readers in the detection of breast cancer in 120 exams. Human reader performance demonstrated mean 90%sensitivity and 76% specificity. AI was comparable in sensitivity (91%) and specificity (77%) compared to human readers.

"The results of this study provide strong supporting evidence that AI for breast cancer screening can perform as well as human readers," Prof Chen said.

Prof Chen said more research is needed before AI can be used as a second reader in clinical practice.

"I think it is too early to say precisely how we will ultimately use AI in breast screening," she said. "The large prospective clinical trials that are ongoing will tell us more. But no matter how we use AI, the ability to provide ongoing performance monitoring will be crucial to its success."

Prof Chen said it's important to recognize that AI performance can drift over time, and algorithms can be affected by changes in the operating environment.

"It's vital that imaging centers have a process in place to provide ongoing monitoring of AI once it becomes part of clinical practice," she said. "There are no other studies to date that have compared such a large number of human reader performance in routine quality assurance test sets to AI, so this study may provide a model for assessing AI performance in a real-world setting."

Related Articles

Citation

AI Performs Comparable to Humans in Reading Mammograms. Appl Radiol.

September 5, 2023